Building your first MCP using FastMCP

By: Sid

Pre-requisites

• MCP Documentation (https://modelcontextprotocol.io/introduction)

• FastMCP (https://gofastmcp.com/getting-started/welcome)

• A couple of MCP concepts that will help:

◦ The different transport types: stdio and SSE

◦ Some core functionality, and what we get with the Python package

What is FastMCP?

The MCP protocol is powerful, but implementing it involves a lot of boilerplate: server setup, protocol handlers, content types, and error management. FastMCP handles all the complex protocol details and server management, so you can focus on building great tools.

It’s designed to be high-level and Pythonic; in most cases, decorating a function is all you need \(^ O ^)/

If you look at the official MCP documentation for Python, you’ll see it uses FastMCP as well; FastMCP 1.0 was contributed to the official MCP Python SDK!

In the example I built for this post, I used FastMCP v2.

Different Transport Techniques

Transports in the Model Context Protocol (MCP) provide the foundation for communication between clients and servers. A transport handles the underlying mechanics of how messages are sent and received.

Standard Input/Output (stdio): The stdio transport enables communication through standard input and output streams. This is particularly useful for local integrations and command-line tools.

Server-Sent Events (SSE): SSE transport enables server-to-client streaming with HTTP POST requests for client-to-server communication.

Use SSE when:

• Only server-to-client streaming is needed

• Working with restricted networks

• Implementing simple updates

This is the transport I prefer, and the one the example server in this post uses.
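To make the difference between the two transports concrete, here is a minimal sketch of how the same JSON-RPC message could be framed on each one. It uses only the standard library; the helper names frame_stdio and frame_sse are made up for illustration and are not part of FastMCP or the MCP SDK.

```python
import json


def frame_stdio(message: dict) -> str:
    """stdio: one JSON-RPC message per line on stdin/stdout."""
    return json.dumps(message) + "\n"


def frame_sse(message: dict, event: str = "message") -> str:
    """SSE: an 'event:' line, a 'data:' line, then a blank line."""
    return f"event: {event}\ndata: {json.dumps(message)}\n\n"


# A request asking the server which tools it offers.
request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

print(frame_stdio(request), end="")
print(frame_sse(request), end="")
```

Either way the payload is the same JSON; only the envelope around it changes, which is why a server can usually switch transports without touching its tools.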

MCP makes it easy to implement custom transports for specific needs. Any transport implementation just needs to conform to the Transport interface :)

What are Tools? (Think of them like POST endpoints)

Tools in FastMCP transform regular Python functions into capabilities that LLMs can invoke during conversations. When an LLM decides to use a tool:

• It sends a request with parameters based on the tool’s schema.

• FastMCP validates these parameters against your function’s signature.

• Your function executes with the validated inputs.

• The result is returned to the LLM, which can use it in its response.
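The four steps above can be sketched in plain Python. This is a simplified imitation, not FastMCP's implementation: the TOOLS registry and handle_tool_call are hypothetical names, and the validation only checks argument names (not types) via inspect.

```python
import inspect


def get_weather(city: str) -> str:
    """Return a canned forecast (sample data only)."""
    return {"Seattle": "Rainy", "San Diego": "Sunny"}.get(city, "Unknown city")


# Hypothetical registry mapping tool names to functions.
TOOLS = {"get_weather": get_weather}


def handle_tool_call(request: dict) -> dict:
    """Validate the arguments, run the tool, and wrap the result."""
    params = request["params"]
    func = TOOLS[params["name"]]
    try:
        # bind() fails if an argument is missing or unexpected.
        bound = inspect.signature(func).bind(**params.get("arguments", {}))
    except TypeError as exc:
        error = {"code": -32602, "message": str(exc)}  # JSON-RPC "invalid params"
        return {"jsonrpc": "2.0", "id": request["id"], "error": error}
    text = func(*bound.args, **bound.kwargs)
    result = {"content": [{"type": "text", "text": text}]}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# The kind of request an MCP client sends when the LLM picks a tool.
request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "get_weather", "arguments": {"city": "Seattle"}},
}
print(handle_tool_call(request))
```

With FastMCP you never write this dispatcher yourself; the sketch is only meant to show what happens between the LLM's request and your function.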

FastMCP provides the @mcp.tool decorator, which:

• Uses the function name as the tool name

• Uses the function’s docstring as the description

• Generates an input schema based on the function’s parameters and type annotations.

• Handles parameter validation and error reporting.
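To see roughly where the name, description, and input schema come from, here is a stdlib-only sketch. The describe_tool helper and the JSON_TYPES table are assumptions made up for this example; FastMCP's real schema generation is more thorough than this.

```python
import inspect
from typing import Any, Callable, get_type_hints

# Simplified mapping from Python types to JSON Schema type names.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func: Callable[..., Any]) -> dict:
    """Build a tool description from a function (hypothetical helper)."""
    hints = get_type_hints(func)
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Fall back to "string" for unannotated or unsupported types.
        properties[name] = {"type": JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }


def add(a: int, b: int = 0) -> int:
    """Add two integers."""
    return a + b


print(describe_tool(add))
```

This is also why type annotations and a good docstring matter: they are what the LLM gets to see when it decides whether and how to call your tool.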

Return types are automatically converted for the LLM:

• str → text

• dict, list, Pydantic model → JSON

• bytes → base64-encoded blob

• None → no content
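The mapping above can be pictured as a small conversion function. This is only a sketch: to_content is a hypothetical name, and the dictionaries are simplified stand-ins rather than the exact MCP content objects (Pydantic models are left out to keep it stdlib-only).

```python
import base64
import json
from typing import Any


def to_content(value: Any) -> list:
    """Convert a tool's return value into a list of content items (simplified)."""
    if value is None:
        return []  # no content
    if isinstance(value, str):
        return [{"type": "text", "text": value}]
    if isinstance(value, bytes):
        # Binary data is sent as base64 text.
        return [{"type": "blob", "blob": base64.b64encode(value).decode("ascii")}]
    # dicts and lists are serialized to JSON.
    return [{"type": "text", "text": json.dumps(value)}]


print(to_content("Rainy"))
print(to_content({"city": "Seattle", "forecast": "Rainy"}))
print(to_content(b"hi"))
print(to_content(None))
```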

You can use async def for tools that do I/O (like network requests), so your server doesn’t get blocked.

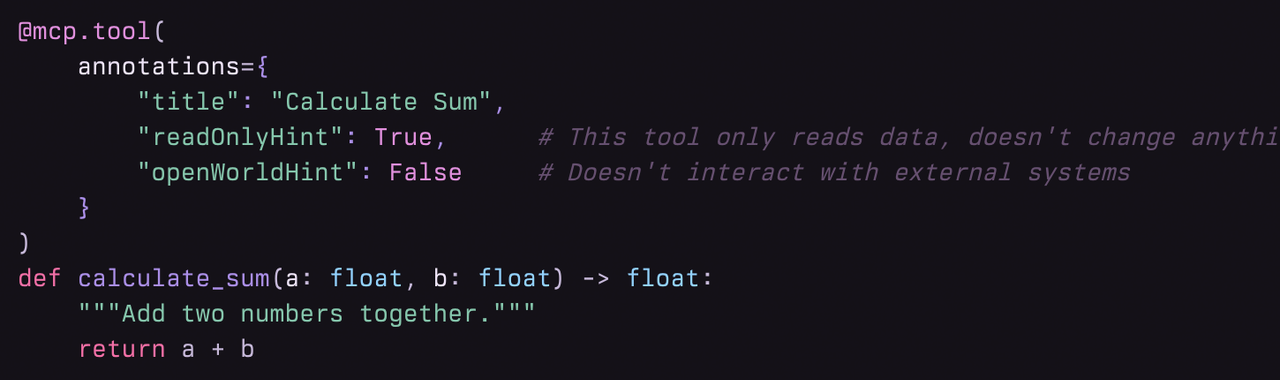
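Here is a small sketch of why async def helps for I/O-heavy tools. The fetch_forecast function is hypothetical, and asyncio.sleep stands in for a real network request; the point is that both calls wait at the same time instead of one after the other.

```python
import asyncio


async def fetch_forecast(city: str) -> str:
    """Hypothetical async tool body; asyncio.sleep stands in for a network call."""
    await asyncio.sleep(0.1)
    return f"Forecast for {city}"


async def main() -> list:
    # Both coroutines wait concurrently, so neither blocks the other.
    return await asyncio.gather(fetch_forecast("Seattle"), fetch_forecast("San Diego"))


results = asyncio.run(main())
print(results)
```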

Using annotations in tools:

Annotations are extra metadata that help client applications (like UIs or LLMs) understand how to use or display the tool.

They do not affect the tool’s logic.

How to add annotations:

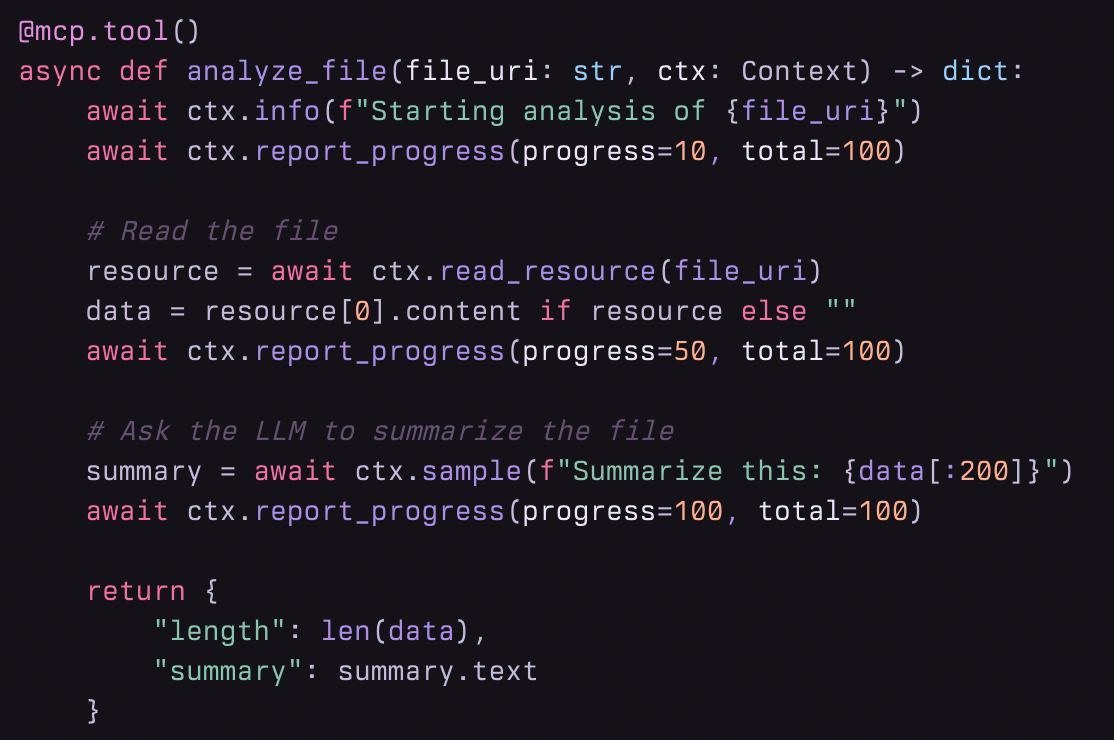
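For a feel of what a client might do with annotations, here is a sketch. The keys (title, readOnlyHint, destructiveHint, openWorldHint) are the hint names from the MCP tool annotations; the needs_confirmation rule is a made-up example of client behavior, not something FastMCP or any particular client is known to implement.

```python
# Annotation dictionaries as a server might attach them to two tools.
weather_annotations = {"title": "Get Weather", "readOnlyHint": True, "openWorldHint": True}
delete_annotations = {"title": "Delete File", "readOnlyHint": False, "destructiveHint": True}


def needs_confirmation(annotations: dict) -> bool:
    """Hypothetical client rule: confirm before running tools that may destroy data."""
    if annotations.get("readOnlyHint", False):
        return False
    # Per the MCP spec, a tool is assumed destructive unless it says otherwise.
    return annotations.get("destructiveHint", True)


print(needs_confirmation(weather_annotations))
print(needs_confirmation(delete_annotations))
```

Note that these are hints only: the tool runs exactly the same with or without them.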

Using context in tools:

Context is a special helper object that you add to your tool function.

It lets your tool log messages, report progress, read files, ask the LLM for help, and find out who called it.

To use it, add ctx: Context as a parameter and call its methods.

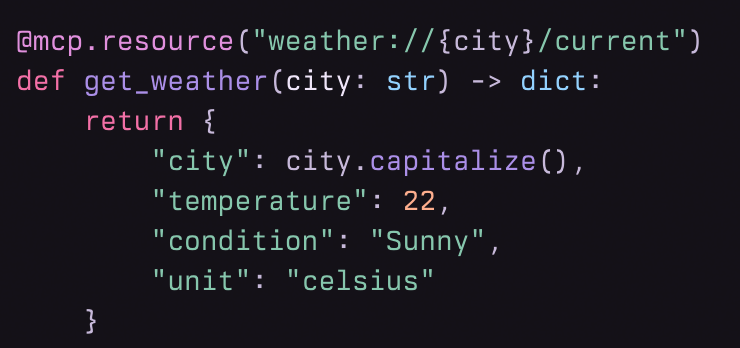
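One nice side effect of receiving the context as a parameter is that you can try out a tool's logic locally with a stand-in object. The sketch below assumes a tool that only calls info and report_progress, which are meant to mirror the FastMCP Context methods of the same name (check the FastMCP docs for the exact signatures). FakeContext and process_cities are hypothetical; in a real server you would annotate the parameter as ctx: Context and FastMCP would inject the real object.

```python
import asyncio
from typing import Optional


class FakeContext:
    """Local stand-in for a FastMCP Context; it just records what the tool reports."""

    def __init__(self) -> None:
        self.events = []

    async def info(self, message: str) -> None:
        self.events.append(("info", message))

    async def report_progress(self, progress: float, total: Optional[float] = None) -> None:
        self.events.append(("progress", progress, total))


async def process_cities(cities: list, ctx: FakeContext) -> int:
    """Hypothetical tool body that logs a message and reports progress per city."""
    await ctx.info(f"Processing {len(cities)} cities")
    for done, _city in enumerate(cities, start=1):
        await ctx.report_progress(progress=done, total=len(cities))
    return len(cities)


ctx = FakeContext()
count = asyncio.run(process_cities(["Seattle", "New York"], ctx))
print(count, ctx.events)
```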

What are Resources? (Sort of like GET endpoints)

A resource is like a read-only file or data endpoint that your LLM or client can ask for by name (using a URI, like a web address).

It can be a static file, a piece of text, a chunk of JSON, or even something generated on the fly by a Python function.

Resources are not tools (which do things); resources are data (which you read).

• Static resources: Predefined files or text (like a README file or a notice).

• Dynamic resources: Data generated by a function when requested (like the current weather, or a database lookup).

• Resource templates: Resources with parameters in the URI (like "weather://{city}/current"), so you can get different data for different requests.

How do resources work?

• You define a resource in your Python code, using the @mcp.resource decorator.

• You give it a unique URI (like "resource://greeting" or "data://config").

• When a client (like an LLM) asks for that URI, FastMCP:

1. Finds your function or file for that resource.

2. Runs the function (if it’s dynamic) or reads the file (if it’s static).

3. Returns the data to the client.
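The lookup steps above, including resource templates, can be sketched with a tiny registry. This is a stdlib-only imitation for illustration: the resource decorator, registry, and read_resource here are hypothetical and are not FastMCP's own objects.

```python
import re
from typing import Callable, Dict

# Hypothetical registry: URI (or URI template) -> function.
registry: Dict[str, Callable[..., str]] = {}


def resource(uri: str):
    """Register a function under a URI, similar in spirit to @mcp.resource."""
    def decorator(func):
        registry[uri] = func
        return func
    return decorator


@resource("resource://greeting")
def get_greeting() -> str:
    return "Hello from FastMCP Resources!"


@resource("weather://{city}/current")
def current_weather(city: str) -> str:
    return f"Weather for {city}"


def read_resource(uri: str) -> str:
    """Find the matching function, run it, and return its data."""
    for template, func in registry.items():
        # Turn "{name}" placeholders into named regex groups.
        pattern = re.escape(template).replace(r"\{", "(?P<").replace(r"\}", ">[^/]+)")
        match = re.fullmatch(pattern, uri)
        if match:
            return func(**match.groupdict())
    raise KeyError(f"No resource registered for {uri}")


print(read_resource("resource://greeting"))
print(read_resource("weather://Seattle/current"))
```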

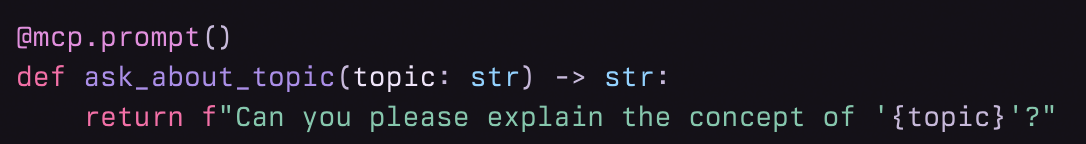

What are Prompts?

• Prompts are like reusable message templates that you define in Python.

• They help LLMs generate consistent, structured, and purposeful responses.

• Think of a prompt as a fill-in-the-blank message that you can use over and over, just by changing the blanks (parameters).

How do prompts work?

• You define a prompt using the @mcp.prompt() decorator on a Python function.

• You give it parameters (like topic, language, etc.) in the function signature.

• When a client (or LLM) requests that prompt and provides values for the parameters, FastMCP:

◦ Validates the parameters.

◦ Runs your function with those values.

◦ Returns the generated message(s) to the LLM.
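The fill-in-the-blank idea can be sketched like this. The explain_topic function is the kind of function you would decorate with @mcp.prompt(); render_prompt is a hypothetical helper that imitates the validate, run, and return steps, and the message dictionary is a simplified stand-in for a real prompt message.

```python
import inspect


def explain_topic(topic: str, language: str = "English") -> str:
    """Ask for a short explanation of a topic."""
    return f"Please explain {topic} in {language}, in three sentences or fewer."


def render_prompt(func, arguments: dict) -> list:
    """Validate the arguments, run the prompt function, and wrap the text as a message."""
    # bind() raises TypeError if a required parameter is missing or unknown.
    bound = inspect.signature(func).bind(**arguments)
    bound.apply_defaults()
    text = func(*bound.args, **bound.kwargs)
    return [{"role": "user", "content": text}]


print(render_prompt(explain_topic, {"topic": "MCP"}))
print(render_prompt(explain_topic, {"topic": "MCP", "language": "Spanish"}))
```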

Example code that uses these concepts:

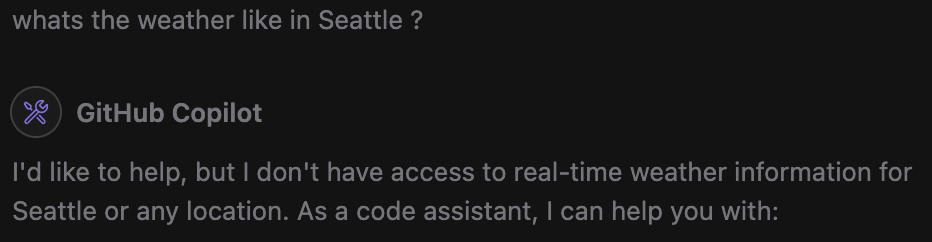

First, try asking your copilot what the weather is like in your city. It should say that it doesn't have access to weather information, or it will suggest building a weather API application to fetch the data.

Write this code and save it in a Python file:

from fastmcp import FastMCP

# Name the server; `instructions` is the FastMCP v2 keyword for describing it to clients.
mcp = FastMCP(
    "weather-mcp",
    instructions="MCP server for accessing weather documentation",
)


@mcp.tool()
def get_weather(city: str) -> str:
    """
    This is an MCP server tool that can help fetch the weather data
    """
    # Hard-coded sample data; a real tool would call a weather API here.
    if city == "San Diego":
        return "Sunny"
    elif city == "Seattle":
        return "Rainy"
    elif city == "New York":
        return "Pleasant and Cloudy"
    else:
        return "Unknown city"


@mcp.resource("resource://greeting")
def get_greeting() -> str:
    """Provides a simple greeting message."""
    return "Hello from FastMCP Resources!"


def main():
    # Serve over SSE on all interfaces, port 8052.
    # Assumes a FastMCP v2 release whose run() accepts host and port.
    mcp.run(transport="sse", host="0.0.0.0", port=8052)


if __name__ == "__main__":
    main()

Install FastMCP using pip3 install fastmcp

Run the file from a terminal:

python3 <file_name>.py

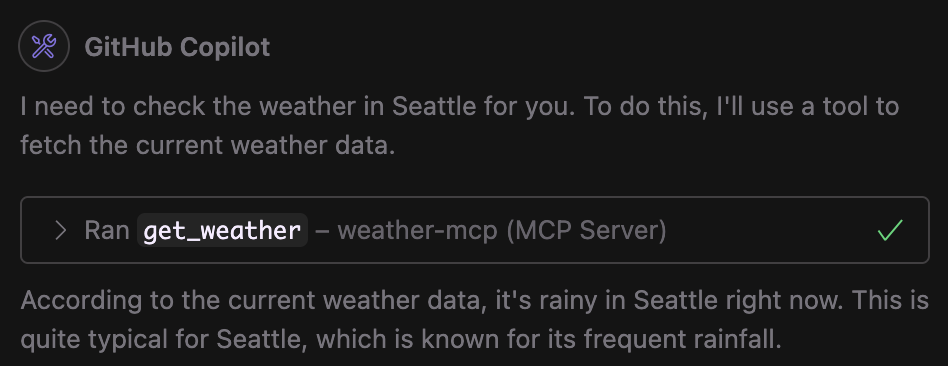

Now ask your copilot about the weather again. You should see it using the tool and giving responses like this.

Also, notice that we only returned "Rainy", yet the MCP client takes that returned value and formulates full sentences around it, and even backs it up when it matches what the model already knows from its pre-training data.

This is how I built an MCP server at work. I obviously can't open source that code :(

but y'all should try building one, super easy; super fun!